October 28, 2019

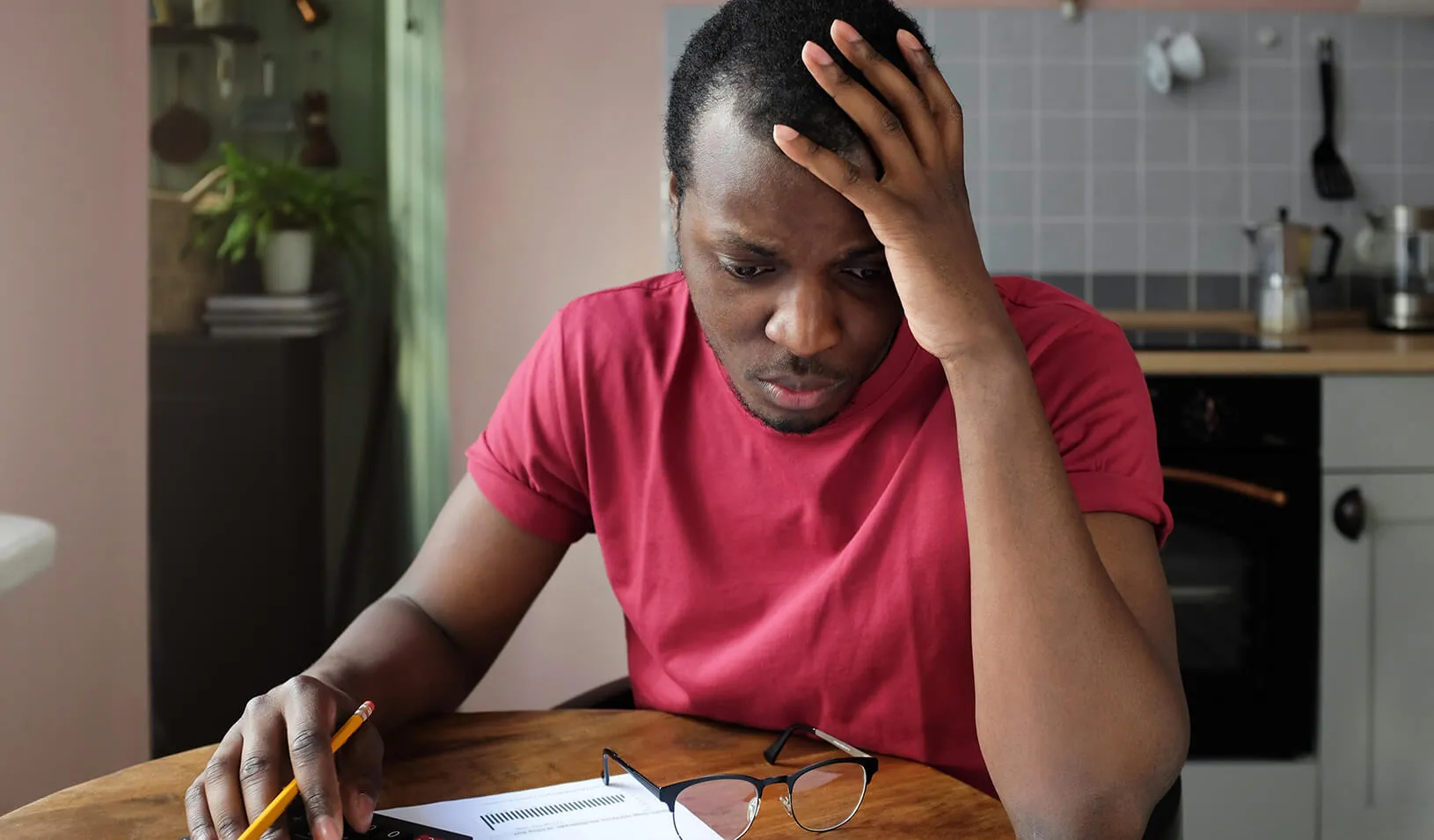

by Katia Savchuk

Discrimination in the U.S. credit market is well documented. Historically, minorities have disproportionately been denied loans, mortgages, and credit cards, or charged higher rates than other customers. Now that artificial intelligence is taking over many credit decisions, and taking human bias out of the equation, it’ll be easy to enforce laws against discrimination in lending, right?

Not necessarily, argues Jann Spiess, an assistant professor of operations, information, and technology at Stanford Graduate School of Business. In a recent paper in The University of Chicago Law Review, he and Talia Gillis, a doctoral student at Harvard Business School and Harvard Law School, examined what happens when existing anti-discrimination rules are applied to choices made by machines.

“The standard regulations in mortgage and credit decisions are too focused on the role of the human in the process,” Spiess says. “They’re ill-equipped to deal with algorithmic decisions.”

Theoretically, Spiess says, one of the most promising aspects of using algorithms in lending is that you can remove from the equation characteristics, such as race and gender, that are regarded as “protected,” meaning that federal law prohibits lenders from considering such variables in their decision-making.

But Spiess and his coauthor found that excluding race from the algorithmic models did nothing to close the gap in predicted default rates among white, Black, and Hispanic borrowers. One reason is that race tends to correlate with many other characteristics, such as an applicant’s neighborhood. But the researchers found that eliminating 10 other factors that correlated the most with race, such as education level and property type, only slightly reduced the disparity in default rate predictions.

The Downside of Filtering Out Inputs

“In a world where we have lots of information about every individual and a powerful machine to squeeze out a signal, it’s possible to reconstruct whether someone is part of a protected group even if you exclude that variable,” Spiess says. “And because the rules are so complex, it’s really hard to understand which input caused a certain decision. So it’s of limited use to forbid inputs.”
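The point about reconstructing a protected attribute can be illustrated with a toy sketch. Everything below is an assumption made for illustration: the records are synthetic, the single proxy (`neighborhood`) and the helper names are hypothetical, and the majority-vote lookup is far cruder than any model a lender would use. It only shows that a correlated input can recover group membership better than guessing, even when the group label is never given to the decision rule.

```python
from collections import Counter, defaultdict

# Synthetic (neighborhood, group) pairs; NOT data from the study.
records = (
    [("north", "A")] * 8 + [("north", "B")] * 2
    + [("south", "A")] * 3 + [("south", "B")] * 7
)

def fit_majority_by_proxy(rows):
    """Map each proxy value to the group seen most often with it."""
    counts = defaultdict(Counter)
    for proxy, group in rows:
        counts[proxy][group] += 1
    return {proxy: c.most_common(1)[0][0] for proxy, c in counts.items()}

def accuracy(mapping, rows):
    """Share of rows whose group is guessed correctly from the proxy alone."""
    hits = sum(1 for proxy, group in rows if mapping[proxy] == group)
    return hits / len(rows)

mapping = fit_majority_by_proxy(records)
proxy_accuracy = accuracy(mapping, records)  # in-sample, for illustration only

# Baseline: always guess the most common group, ignoring the proxy.
baseline_accuracy = Counter(g for _, g in records).most_common(1)[0][1] / len(records)

print(f"proxy: {proxy_accuracy:.2f}, baseline: {baseline_accuracy:.2f}")
```

With many inputs and a flexible model, the recovery would typically be sharper than with this one-variable lookup, which is why dropping the protected variable alone offers limited protection.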

Including factors like race in an algorithm’s decision may actually lead to less discriminatory outcomes, Spiess argues: “If a group of people historically didn’t have access to credit, their credit score might not reflect that they’re creditworthy.” By openly including a factor such as race in the equation, the algorithm can be designed in such cases to give less weight to an applicant’s credit score, thus removing the invisible but inherent racial bias that would otherwise exist.

Although eliminating race, gender, or other characteristics from the model doesn’t produce a quick fix, algorithmic decision-making does offer a different kind of opportunity to reduce discrimination, Spiess says. Instead of trying to untangle how an algorithm makes decisions, regulators can focus on whether it leads to fair outcomes.

“We could run a stress test — a simulation — before an algorithm is used on real people to see whether it conforms to certain regulations,” Spiess says. “That wouldn’t be possible with a human, since we could never know they’d perform the same way in other cases.”
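A stress test of this kind might look roughly like the sketch below. It is not the researchers' procedure: the sample, the rule `predicted_default`, the disparity measure (the gap in mean predicted default between groups), and the `tolerance` threshold are all hypothetical choices made for illustration. What counts as an acceptable outcome is exactly the kind of question a regulator would have to settle.

```python
from statistics import mean
from typing import Any, Callable, Dict, List

Applicant = Dict[str, Any]

# Hypothetical fixed test sample held by the regulator (synthetic values).
SAMPLE: List[Applicant] = [
    {"group": "A", "income_k": 80},
    {"group": "A", "income_k": 60},
    {"group": "B", "income_k": 40},
    {"group": "B", "income_k": 50},
]

def predicted_default(applicant: Applicant) -> float:
    """Hypothetical rule under test; it never reads the 'group' field."""
    raw = 0.5 - 0.005 * float(applicant["income_k"])
    return max(0.0, min(1.0, raw))

def stress_test(rule: Callable[[Applicant], float],
                sample: List[Applicant],
                tolerance: float) -> Dict[str, Any]:
    """Run the rule on every applicant and compare group-level outcomes."""
    by_group: Dict[str, List[float]] = {}
    for applicant in sample:
        by_group.setdefault(applicant["group"], []).append(rule(applicant))
    means = {group: mean(values) for group, values in by_group.items()}
    gap = max(means.values()) - min(means.values())
    return {"means": means, "gap": gap, "passes": gap <= tolerance}

report = stress_test(predicted_default, SAMPLE, tolerance=0.05)
print(report)
```

Because the rule is a fixed function, the same sample can be replayed against any lender's algorithm, which is what makes results comparable across lenders in a way that auditing human decisions is not.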

The researchers used data drawn from successful mortgage applicants in Boston in 1990, collected under the Home Mortgage Disclosure Act. They selected the dataset, nearly 30 years old, because it was readily available and included demographic information about applicants, along with the interest rates they received on home loans. Based on those rates, the researchers simulated the probability of default that lenders would have assigned to each applicant. They then used this dataset to train a machine-learning model similar to one that lenders could use today to make decisions about new applicants.

Should Credit-Score Algorithms Be Certified?

Spiess and Gillis envision a government clearinghouse that certifies algorithms as providing fair decisions, so each company isn’t left to its own devices when coming up with formulas. That would require regulators to think carefully about the population sample used in testing, the researchers argue. They would need to use a local, rather than national, sample if they want to understand how a rule would work in the area where a lender operates, and they’d need to use the same sample to compare different lenders.

This model would also require regulators to define which decisions are considered non-discriminatory. For example, is it best if an algorithm errs on the side of giving credit to people who don’t deserve it, or should it err on the side of fiscal caution?

“It’s not just a matter of mathematics,” Spiess says. “There are clearly tradeoffs that we as a society have to resolve by coming together and saying what we expect of an algorithm. Existing regulations just don’t include those answers yet.”

Coming up with those answers will require researchers to bridge divides across disciplines like mathematics, computer science, law, policy, philosophy, and more, Spiess says. “The whole research agenda going forward should be interdisciplinary, and we wanted to contribute to that,” he says. “We need to come up with constraints we care about and ensure companies fulfill them so they’re not choosing their own formulation and running with it. As we know, that doesn’t produce outcomes that society thinks are fair.”
